First published on the AppSignal blog

The single most important rule of testing is to do it.

Kernighan & Pike, The Practice of Programming, 1999

Despite constantly changing technologies and the needs of customers, some wisdom seems eternal. Programmers need to test their code.

But thorough testing takes time. When we do it well, everything works, and a massive testing effort feels like a waste. However, when we do it badly, our code is often broken, and we wish that we had done better testing.

I have some good news for you. Testing doesn’t have to be arduous and we can still get good results. Part of it comes down to our attitude: come at it the right way and it will be much easier.

Another part of it is the techniques we use. In this blog post, I’ll show you some testing techniques (with code examples on GitHub) that can deliver much more bang for your buck.

Why Test?

We test our code to ensure that it works. I know, it seems obvious.

But that’s not enough. To be more effective, we must catch bugs early. The earlier we find them, the cheaper they are to fix. When a bug goes out to production and is found by a customer, then it’s a whole lot more expensive to get it reported, reproduced, and handed off to a developer — and they probably then have to spend time loading the mental context they need to find and fix it.

Testing enables refactoring. Evolution allows good design to emerge naturally through repeated refactoring and simplification, but we can’t do that safely without testing.

The best reason for testing is that it allows us to see things from our customer's perspective. This is one of the most important perspectives we can take, helping us see the bigger picture around our code.

My Fundamental Rule of Development

I don’t have many hard and fast rules, but this one is inviolable:

Keep your code working.

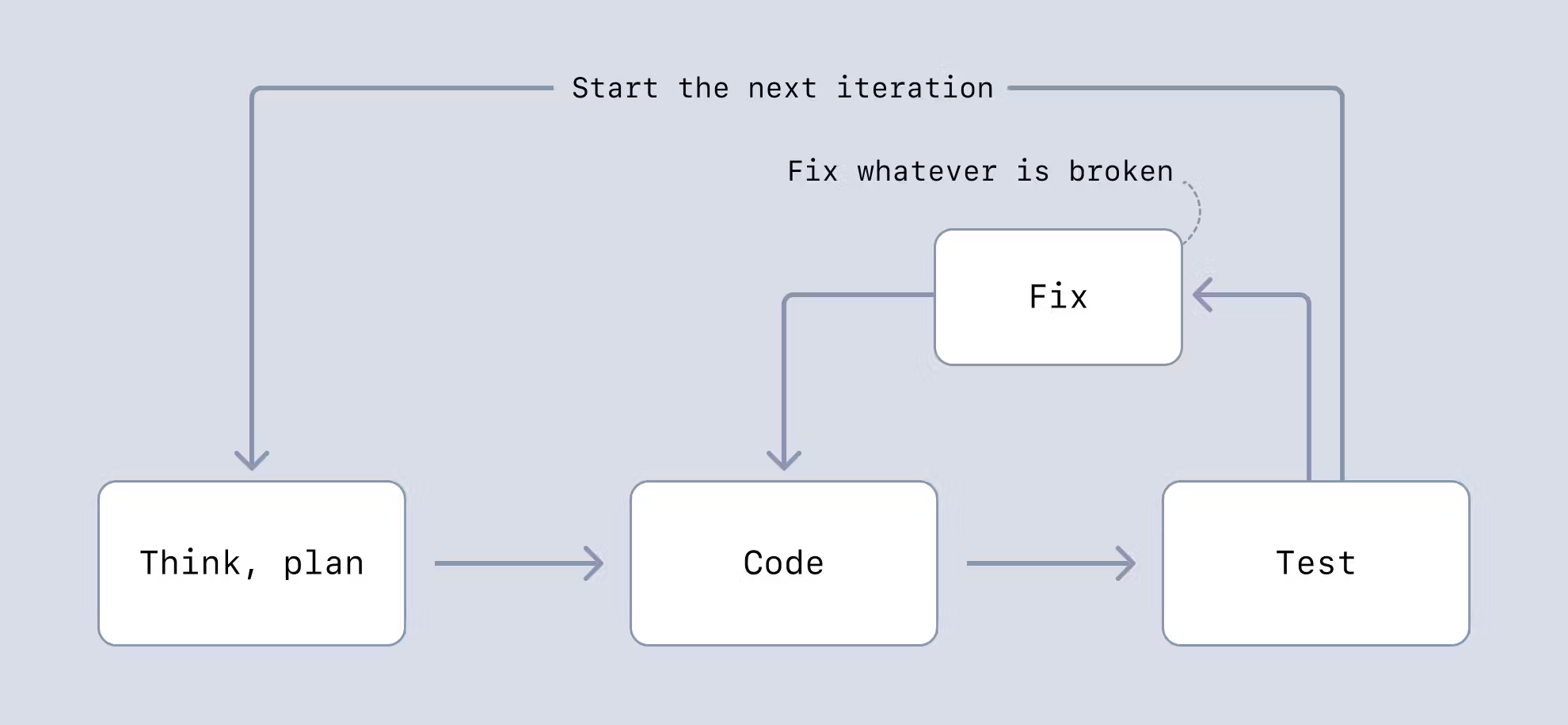

This is a mantra I repeat to myself while coding. Coding becomes a game where I take code through a series of iterations, going from working code to working code, then testing and making it work again. And so it goes. The sum total is a large amount of working and reliable code.

I’ve been writing code for a long time. In the early years of my career, I was arrogant enough to assume that my code would come out working and was often surprised when it didn’t.

In the later part of my career, I’ve turned that assumption around: now, I think that most code comes out broken. Going further, I believe that the natural state of code is being broken. There are so many more ways for code to be broken than for it to work by accident.

When Should We Test Our Code?

We should test our code early and often.

Testing frequently means problems don’t build up and snowball into bigger problems. It creates the fast feedback loop we need to stay on track and be agile.

Testing early means we don’t delay the discovery of problems. We should aim for a small distance between coding and testing. The less we have to test, the easier it is to test, and the less chance that unnoticed bugs can creep into our code.

We should make frequent commits to our code repository. I like to think of this as putting working code in the bank. If, at any point, I run into a mess, I can reset my working code to the last commit — which I know is working, because I only commit working code. I can abandon my work in progress at any time without losing much effort. Aiming for small commits means I never have much work to lose.

Manual Testing vs. Automated Testing

I’ve been a developer for over 26 years, and I’ve probably spent more time doing manual testing than automated testing. So I know for sure that there’s nothing inherently wrong with manual testing and I still often do it, even when I do later follow it up with automated testing.

Manual testing can get us a long way. But repeating the same manual tests over and over again is tedious and time-consuming, not to mention prone to mistakes and laziness (you never forget to test, right?).

When we write an automated test for a feature or a piece of code, we get all future testing of that feature for free. For this reason, automated testing can be worthwhile, but it will only pay for itself over the long haul.

Automated testing allows us to scale up our testing. A single developer has the potential to conduct vastly more tests than they could ever achieve manually. Of course, this assumes that a massive effort has already been expended to build the automated tests in the first place.

Because automated testing is automatic, it means we won’t be tempted to skip or forget testing. It also enables more frequent testing, which, as discussed, is crucial to getting fast feedback and keeping our code working.

Running a suite of automated tests gives us immediate confidence in our code, which is priceless and hard to achieve with manual testing.

Even after espousing the benefits of automated testing, I can assuredly say that not all code is worth the effort.

Not All Code Has Equal Value

The stark fact is that not all code is equally important. Some code will be used once or infrequently, some will be thrown out, and some will be drastically changed from its original form.

On the other hand, some code will be very important, some will be updated and evolved constantly, some will need to be very reliable, and some will need to be very secure.

At the outset of writing any piece of code, it’s extremely difficult to know if that code is worth the effort of automated tests. If you invest in automated tests (or automating anything) when the effort isn’t warranted, you will waste precious time that might be better spent elsewhere.

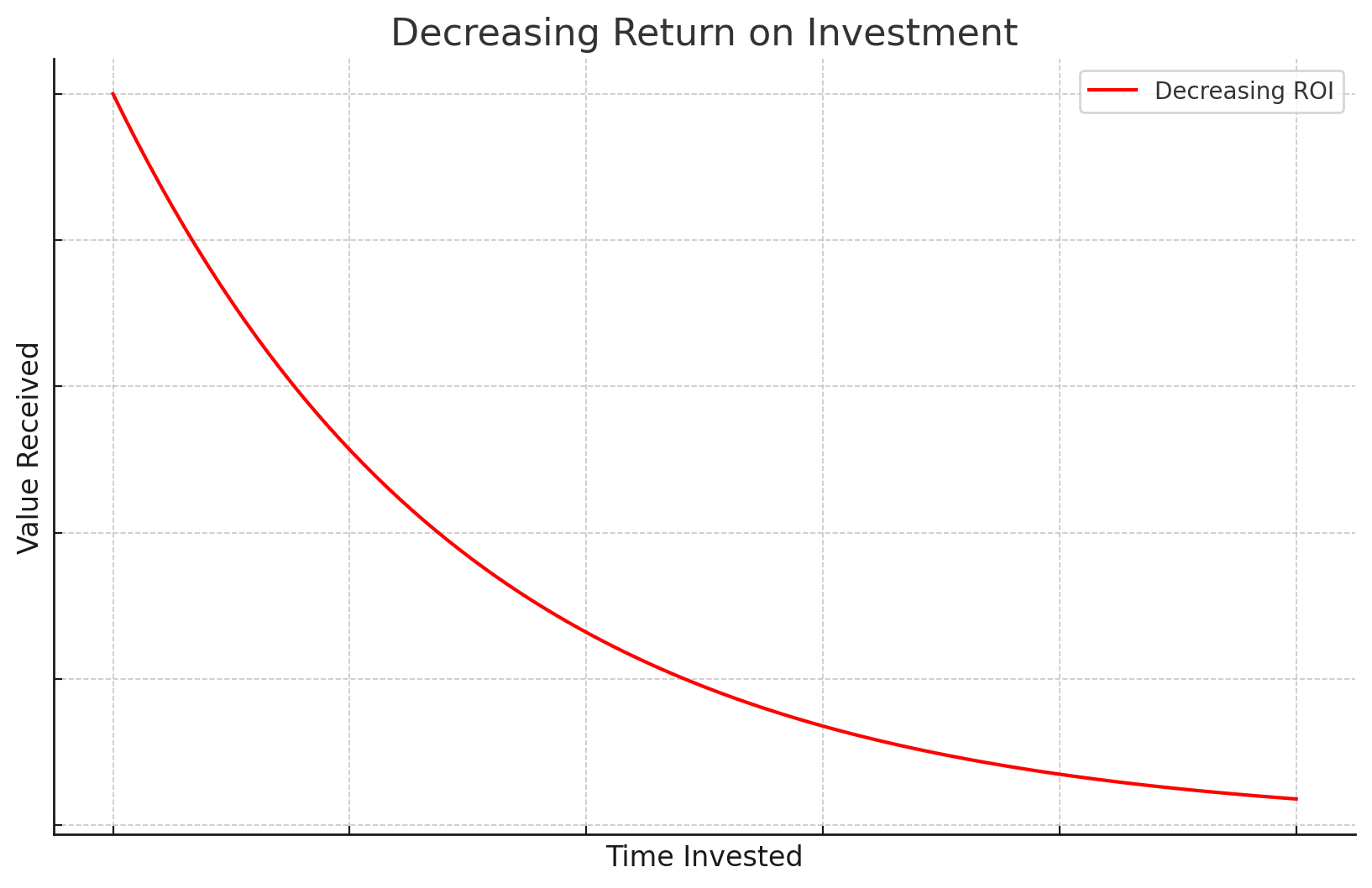

Using test-driven development (TDD) or otherwise aiming for 100% test coverage is a huge sacrifice in productivity because it treats all code as equally valuable. There is a diminishing return on investment for your effort. The more we push our testing to the extreme, the less value it yields.

The Single Best Way to Improve at Testing

I used TDD for many years before I realized I was spending way too much effort trying to test all the code, including the code that didn’t require that level of effort. Sometimes I still use TDD, but only for the most important code. I enjoy practicing TDD: it has helped build my testing discipline and taught me to create code that’s easier to test. But I don’t often practice it now. It’s far too expensive for the resource-starved startups I’ve bootstrapped in recent years.

But TDD gave me what was probably my single most important lesson ever in coding and testing:

Think about testing before coding.

Any effort we make to visualize testing before coding results in code that is easier to test. Code that is easier to test is easier to keep working. Of course, this doesn’t depend on TDD: we can easily fit this kind of thinking into our normal development process without TDD, or even without any automated testing.

Effective JavaScript Testing Techniques

Following are some techniques for good testing with less effort.

You can find the set of example projects here.

| Technique | Usage | Description |
| --- | --- | --- |
| Output testing | Manual or automated | Comparing previous output to latest output to see what has changed. |
| Visual testing | Manual or automated | Comparing before and after screenshots to see what has changed. |
| Manual testing, followed by automated testing | Automated | Testing manually to confirm the code works. Followed by automated tests. |
| Integration testing REST APIs | Automated | Applying automated testing to whole services. |
| Frontend testing with Playwright | Automated | Testing frontends using Playwright. |

Output Testing

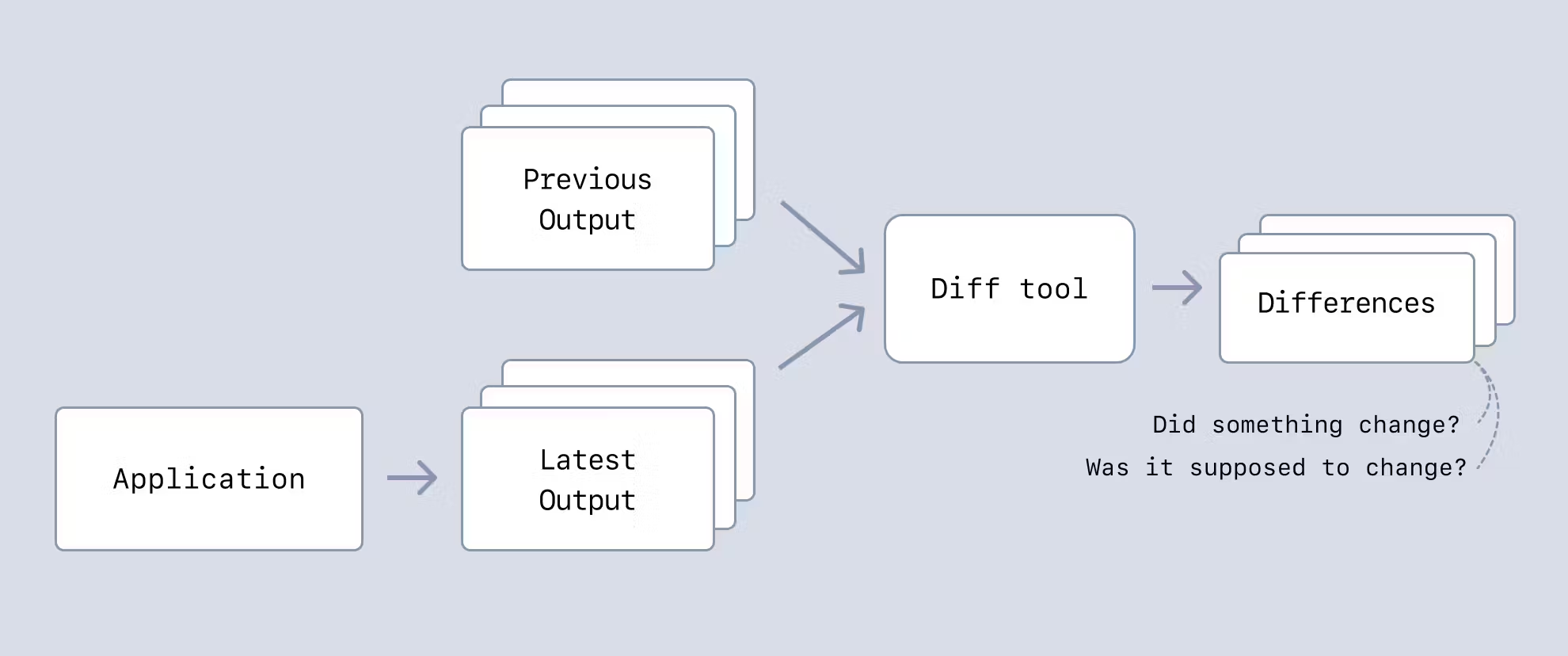

Like golden testing, output testing checks the output against the last known working version. We can easily use a diff tool (e.g., git diff) to view the differences.

This is great for data pipelines (we diff the data that is output), but we can also use it to test behavior by including a log of important events in the output.

Output testing can be used manually (we can visually check the differences) or automatically (by having our CI pipeline fail if there are differences).

Output testing is simple to start using. It supports refactoring (any refactor should cause no difference in the output).
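To make the idea concrete, here is a minimal sketch of an automated output test in Node.js. It is not the code from the example projects: the `checkOutput` and `summarize` functions and the shape of the data are assumptions made up for illustration. The first run saves the output as the known good version, and later runs report which lines have changed.

```javascript
const fs = require("fs");

// Compares `actual` with the last known good ("golden") output stored at goldenPath.
// If no golden file exists yet, the current output is saved as the first golden version.
function checkOutput(actual, goldenPath) {
  if (!fs.existsSync(goldenPath)) {
    fs.writeFileSync(goldenPath, actual);
    return { status: "created", changedLines: [] };
  }

  const expectedLines = fs.readFileSync(goldenPath, "utf8").split("\n");
  const actualLines = actual.split("\n");
  const lineCount = Math.max(expectedLines.length, actualLines.length);

  // Collect the 1-based numbers of the lines that differ.
  const changedLines = [];
  for (let i = 0; i < lineCount; i++) {
    if (expectedLines[i] !== actualLines[i]) {
      changedLines.push(i + 1);
    }
  }

  return {
    status: changedLines.length === 0 ? "match" : "changed",
    changedLines,
  };
}

// Hypothetical pipeline step whose output we want to keep stable while refactoring.
function summarize(orders) {
  const total = orders.reduce((sum, order) => sum + order.amount, 0);
  return JSON.stringify({ count: orders.length, total }, null, 2) + "\n";
}
```

In a CI script, we could call `checkOutput(summarize(orders), "golden/summary.json")` and exit with a non-zero code when the status is "changed". If the golden file is committed to the repository, git diff shows exactly what moved.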

Visual Testing

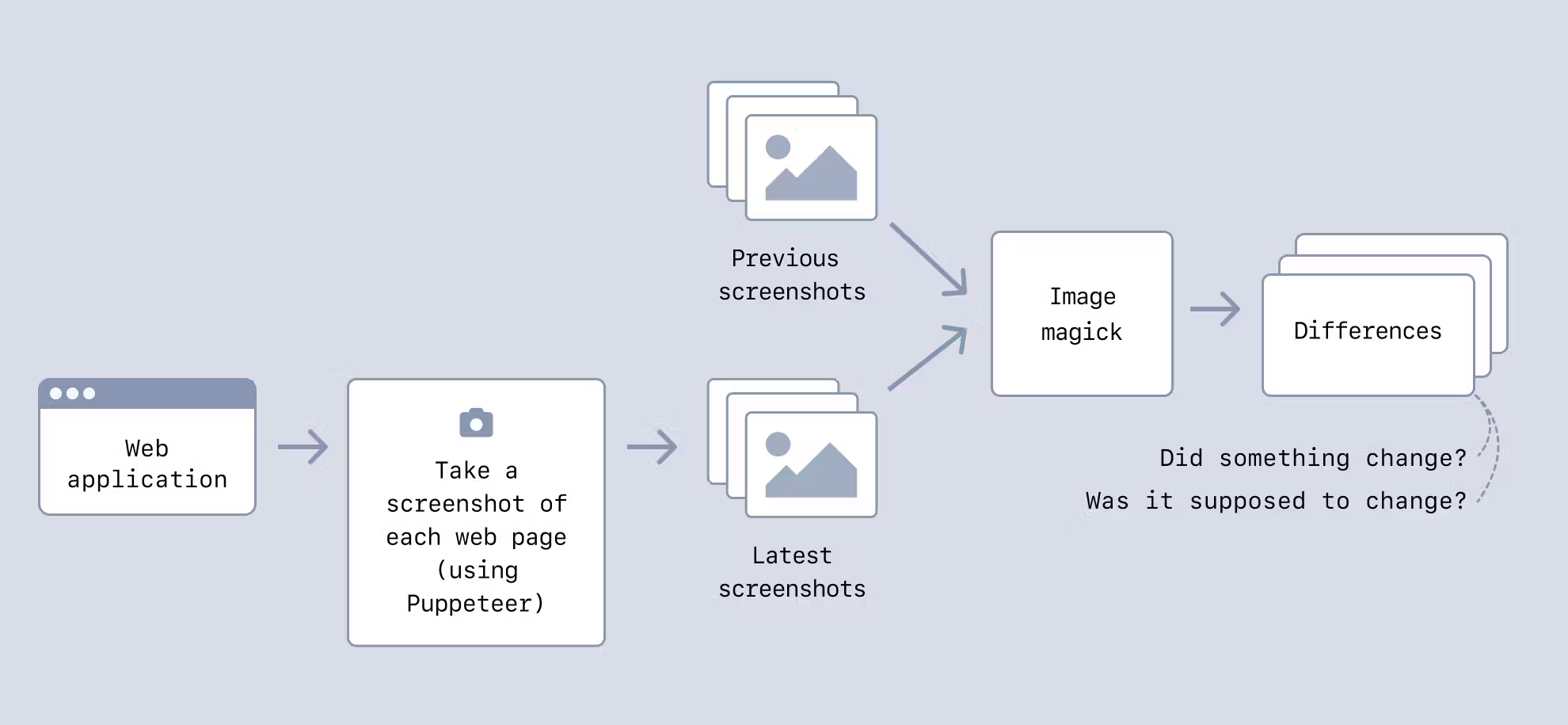

Like snapshot testing, visual testing compares the visual output (e.g., a screenshot) of our application against the last known working version.

This is more difficult than output testing, but it works well for checking that changes in our code make no (or little) difference to its visual output.

My code example uses Puppeteer to screenshot a web page and then ImageMagick to compare the screenshots. ImageMagick gives a metric indicating the amount of difference. This allows us to set a threshold and tune the system to tolerate small differences to a level that we can live with.

Visual testing can be used manually (we scan the differences with our eyes) or automatically (failing our CI pipeline when ImageMagick’s difference metric is above a certain threshold).

With a visual testing system in place, we can quickly scale it across hundreds or thousands of web pages. It can take some time to run a big test suite, but it’s the fastest way to search for visual problems across many web pages.
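The flow described above might look roughly like the sketch below. It is a simplified illustration under some assumptions, not the code from the example projects: it assumes the `puppeteer` package is installed, that ImageMagick's `compare` command is on the PATH (newer installs may need `magick compare` instead), and that `compare -metric RMSE` reports its result on stderr in the form "absolute (normalized)". The function names are made up for this example.

```javascript
const { spawnSync } = require("child_process");

// Pulls the normalized value (between 0 and 1) out of output such as
// "1769.16 (0.0269956)". The output format is an assumption; check your version.
function parseNormalizedRmse(stderrText) {
  const match = /\(([^)]+)\)/.exec(stderrText);
  const value = match ? Number(match[1]) : NaN;
  if (Number.isNaN(value)) {
    throw new Error(`Unexpected compare output: ${stderrText}`);
  }
  return value;
}

// The threshold is ours to tune: higher values tolerate bigger visual changes.
function imagesDifferTooMuch(normalizedDifference, threshold) {
  return normalizedDifference > threshold;
}

// Takes a full-page screenshot with Puppeteer.
// The package is loaded lazily so the helpers above work without it.
async function screenshotPage(url, outputPath) {
  const puppeteer = require("puppeteer");
  const browser = await puppeteer.launch();
  try {
    const page = await browser.newPage();
    await page.goto(url, { waitUntil: "networkidle0" });
    await page.screenshot({ path: outputPath, fullPage: true });
  } finally {
    await browser.close();
  }
}

// Runs ImageMagick on two screenshots and returns the normalized difference.
// A diff image is written to diffPath for manual inspection.
function compareScreenshots(baselinePath, latestPath, diffPath) {
  const result = spawnSync(
    "compare",
    ["-metric", "RMSE", baselinePath, latestPath, diffPath],
    { encoding: "utf8" }
  );
  if (result.error) throw result.error; // e.g., ImageMagick is not installed
  if (result.status === 2) throw new Error(result.stderr); // compare reported an error
  return parseNormalizedRmse(result.stderr);
}
```

A CI step could screenshot each page, call `compareScreenshots` against the stored baseline, and fail the build when `imagesDifferTooMuch(difference, 0.01)` returns true. The 0.01 threshold is only a placeholder to tune.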

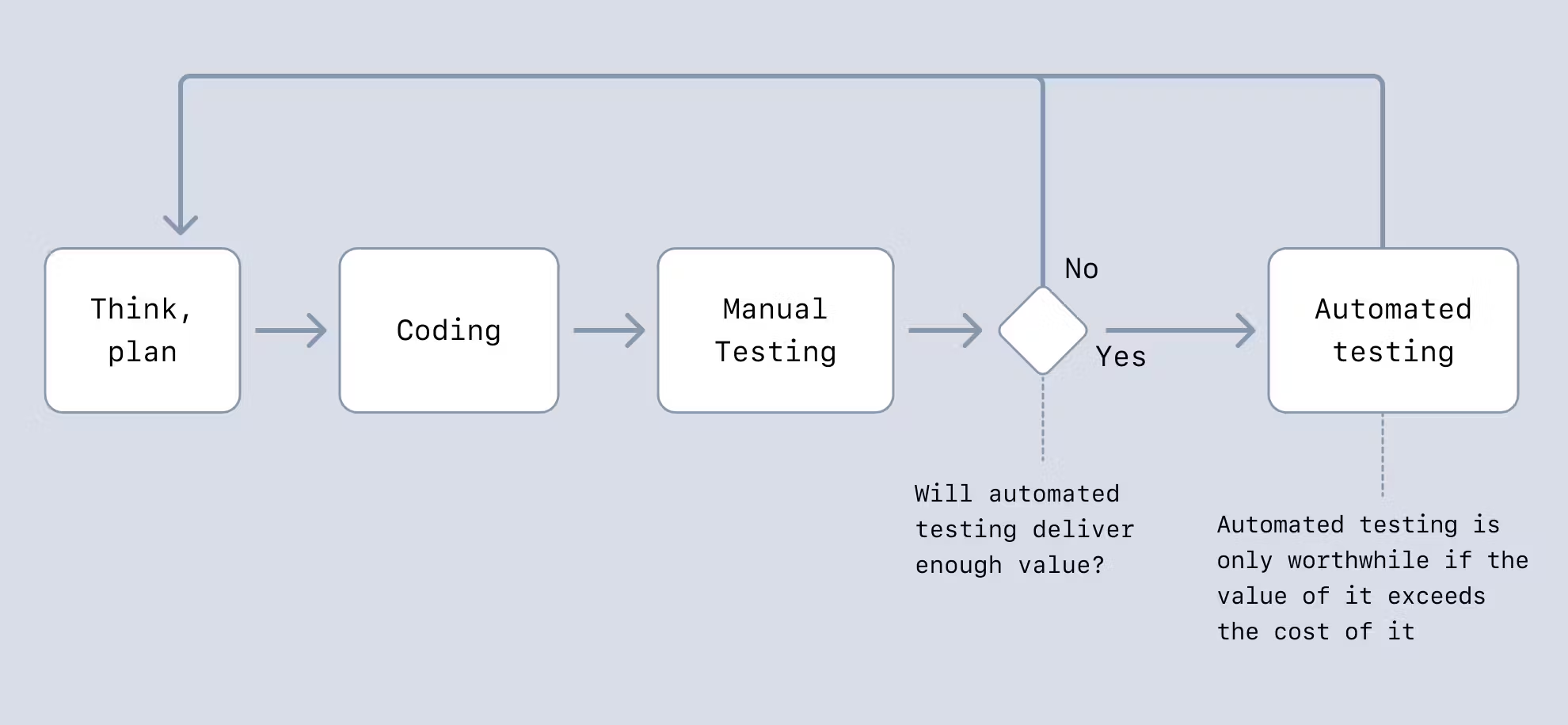

Manual Testing, then Automated Testing

We can get more effective results from traditional automated testing if we do manual testing followed up by automated testing.

This means we can go through multiple rounds of evolution and refactoring supported by manual testing (or output testing, as mentioned previously) before we commit to automated testing. This can save a lot of time because it’s very time-consuming to have to keep automated tests working while we are creating and evolving new code.

Creating automated tests becomes easy as well. We can use the captured output or code behavior for our automated tests. Fitting automated tests to the code (that we already know works) can be much easier than attempting to write tests from scratch.

Saving automated testing until later also allows for an informed decision about whether automated testing is actually necessary in each particular case.

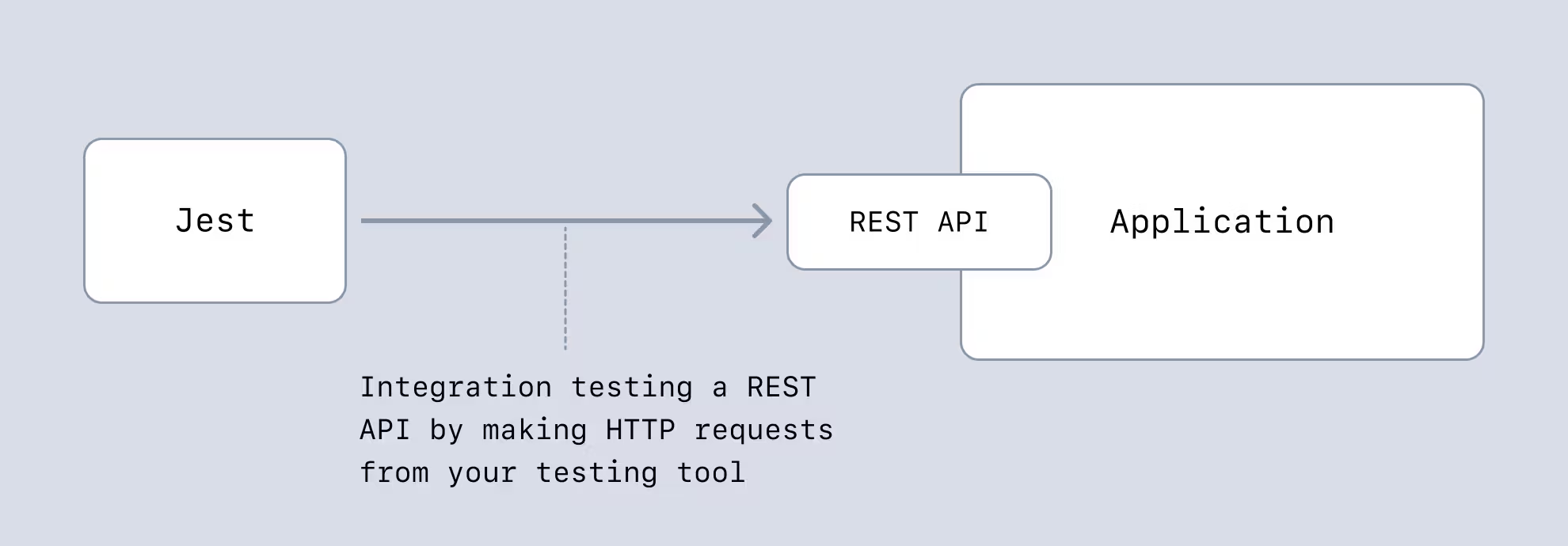

Integration Testing REST APIs

The most effective way to test REST APIs is to make HTTP requests against the entire service using a traditional automated testing framework (e.g., Jest).

This is much faster to implement than unit testing and much easier to keep working over time. We get to test more code with a smaller suite of tests.

We can also test other types of services in a similar way (for example, a microservice that accepts inputs from a message queue). In cases like this, we can feed input via messages instead of HTTP requests.

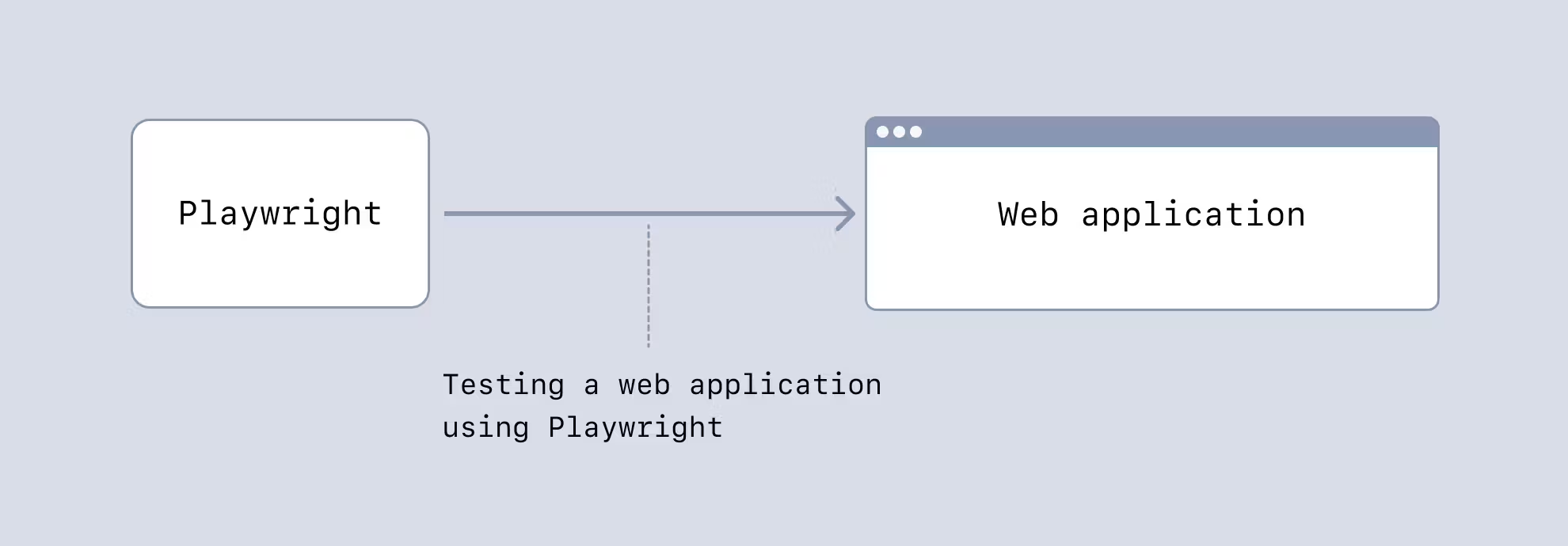

Frontend Testing with Playwright

Anyone who has tried to test UI components using traditional automated testing will tell you how painful it can be.

A more effective way of testing UIs is using a frontend testing framework like Playwright. We can do end-to-end testing of our whole system (frontend and backend), or we can completely mock the backend to focus on integration testing the frontend. This kind of testing covers the most ground for the least effort.
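Here is a small sketch of the mocked-backend approach. It assumes the `playwright` package is installed and that the frontend fetches its data from an `/api/items` endpoint and renders each name in an element with the class `item-name`. The endpoint, selector, data, and function names are all hypothetical.

```javascript
// Canned data returned in place of the real backend (hypothetical shape).
const fakeItems = [
  { id: 1, name: "First item" },
  { id: 2, name: "Second item" },
];

// Builds the response Playwright should send back for the mocked endpoint.
function mockItemsResponse(items) {
  return {
    status: 200,
    contentType: "application/json",
    body: JSON.stringify(items),
  };
}

// Loads the frontend with the backend mocked out and returns the item names it rendered.
async function getRenderedItemNames(appUrl) {
  const { chromium } = require("playwright"); // loaded lazily, only when the check runs
  const browser = await chromium.launch();
  try {
    const page = await browser.newPage();

    // Intercept calls to the (assumed) items endpoint and answer with canned data.
    await page.route("**/api/items", (route) =>
      route.fulfill(mockItemsResponse(fakeItems))
    );

    await page.goto(appUrl);
    await page.locator(".item-name").first().waitFor();
    return await page.locator(".item-name").allTextContents();
  } finally {
    await browser.close();
  }
}
```

A test would then compare the result of `getRenderedItemNames` with the names in `fakeItems`. Removing the `page.route` call turns the same check into an end-to-end test against the real backend.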

Wrapping Up

We all have to test our code. That’s the only way to ensure it’s fit for purpose.

But as we've seen in this post, we don’t have to labor on time-intensive traditional testing methods. There are more effective testing techniques out there that can save us so much time, and we can still have great tests. I encourage you to come up with testing techniques for your situation that need little effort.

The important thing is that we deliver valuable and reliable code to our customers. They don’t care how we build or test it, only that we give them something that works well and delivers the value they need.